Suppose we have a general optimization problem

$$\min_{x \in \Omega} f(x). \tag{1}$$

Also, suppose problem (1) has a minimum and the minimum is achieved by a unique minimizer $x^*$.

Now if I have a point $x \in \Omega$ such that $f(x) - f(x^*)$ is very small, then how small is the distance $\|x - x^*\|$? We might expect that $f(x) - f(x^*) \to 0$ will imply that $x \to x^*$. This is true if $\Omega$ is compact and $f$ is continuous. But this does not tell us the quantitative relationship between the optimal gap, i.e., $f(x) - f(x^*)$, and the distance to the solution, i.e., $\|x - x^*\|$.

In this post, I am going to show that for linear programming (LP), the optimal gap and distance to solutions are the same up to a multiplicative constant which only depends on the problem data.

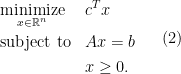

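To start, consider an LP in the standard form, i.e.,

$$\min_{x \in \mathbb{R}^n} \; c^\top x \quad \text{s.t.} \quad Ax = b, \; x \geq 0, \tag{2}$$

where the decision variable is $x \in \mathbb{R}^n$ and the problem data are $A \in \mathbb{R}^{m \times n}$, $b \in \mathbb{R}^m$, $c \in \mathbb{R}^n$. Here $x \geq 0$ means each coordinate of $x$ is nonnegative.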

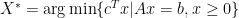

Denote the solution set of the LP above by $X^*$, and the distance from a point $x$ to the solution set $X^*$ by $\operatorname{dist}(x, X^*) = \inf_{x^* \in X^*} \|x - x^*\|$. Note that the norm here is arbitrary, not necessarily the Euclidean norm.

We are now ready to state the theorem.

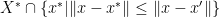

Theorem 1 (Sandwich inequality of optimal gap and distance to solutions of LP) For the standard-form LP above, there exist constants $C_0, c_0 > 0$, which depend only on $A, b, c$ and the norm $\|\cdot\|$, such that for all feasible $x$, i.e., $Ax = b$, $x \geq 0$,

$$C_0 \operatorname{dist}(x, X^*) \;\geq\; c^\top x - c^* \;\geq\; c_0 \operatorname{dist}(x, X^*),$$

where $c^* = c^\top x^*$ for any $x^* \in X^*$ is the optimal value.

The above theorem shows that the optimal gap, i.e., $c^\top x - c^*$, and the distance to the solution set, i.e., $\operatorname{dist}(x, X^*)$, are the same up to a multiplicative constant. The right inequality of the theorem is usually referred to as linear growth in the optimization literature.
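As a quick sanity check of the sandwich inequality, here is a minimal sketch on a toy LP that is not from the post: minimize $x_1$ subject to $x_1 + x_2 = 1$, $x \geq 0$. The toy problem, the Euclidean norm, and the helper names `gap`, `dist_to_solution` and `sandwich_ratios` are all assumptions made for illustration. The unique solution is $(0, 1)$, so the distance to the solution set has a closed form.

```python
import numpy as np

# Toy LP (an assumption for illustration): minimize x1 s.t. x1 + x2 = 1, x >= 0.
c = np.array([1.0, 0.0])
x_star = np.array([0.0, 1.0])    # unique optimal solution, so X* = {x_star}
c_opt = float(c @ x_star)         # optimal value c* = 0


def gap(x):
    """Optimal gap c^T x - c*."""
    return float(c @ x) - c_opt


def dist_to_solution(x):
    """Euclidean distance to X*; X* is a single point in this toy problem."""
    return float(np.linalg.norm(x - x_star))


def sandwich_ratios(num_samples=1000, seed=0):
    """Return gap / dist for random feasible, non-optimal points x = (t, 1 - t)."""
    rng = np.random.default_rng(seed)
    ts = rng.uniform(1e-6, 1.0, size=num_samples)
    xs = np.stack([ts, 1.0 - ts], axis=1)
    return np.array([gap(x) / dist_to_solution(x) for x in xs])


ratios = sandwich_ratios()
# On this LP the gap is t and the distance is sqrt(2) * t, so every ratio is
# 1 / sqrt(2); any c_0 <= 1/sqrt(2) <= C_0 satisfies the sandwich inequality.
print(ratios.min(), ratios.max())
```

On this example the ratio between gap and distance is constant, which is the simplest instance of "the same up to a multiplicative constant". In general the ratio varies with $x$ but stays between $c_0$ and $C_0$.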

The proof below is constructive, and we can in fact take $C_0 = \|c\|_*$ and $c_0 = \frac{\gamma}{\max\{2B, 1\}}$, where $B = \max_{1 \leq i \leq l} \|x_i\|$ and $\gamma = \min\{c^\top x_i - c^*, \; c^\top \gamma_j\}$ for $l_1 + 1 \leq i \leq l$ and $s_1 + 1 \leq j \leq s$. Here $\|\cdot\|_*$ is the dual norm and $x_i, \gamma_j$ are the extreme points and extreme rays of the feasible region. We assume there are $l$ many extreme points and $s$ many extreme rays, with the first $l_1$ $x_i$s and the first $s_1$ $\gamma_j$s in the optimal set $X^*$. See the proof for more detail.
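To make the constants concrete, the sketch below computes one admissible pair $(c_0, C_0)$ from a list of extreme points and extreme rays. It assumes the Euclidean norm (which is its own dual), takes $B$ to be the largest norm of an extreme point, and takes $\gamma$ to be the smallest objective increase over the non-optimal extreme points and rays. That reading of the constants, and the function name `sandwich_constants`, are assumptions of this sketch rather than something fixed by the post.

```python
import numpy as np


def sandwich_constants(c, points, rays, tol=1e-12):
    """Return (c0, C0) for the Euclidean norm.

    points: extreme points of the feasible region, one per row.
    rays:   extreme rays, one per row (may be empty); normalized here.
    Assumes the LP has a finite optimum, so c @ ray >= 0 for every ray.
    """
    c = np.asarray(c, dtype=float)
    points = np.asarray(points, dtype=float).reshape(-1, c.size)
    rays = np.asarray(rays, dtype=float).reshape(-1, c.size)
    if rays.size:
        rays = rays / np.linalg.norm(rays, axis=1, keepdims=True)

    point_vals = points @ c
    c_opt = point_vals.min()          # optimal value, attained at an extreme point
    ray_vals = rays @ c

    # gamma: smallest strictly positive objective increase among
    # non-optimal extreme points and non-optimal extreme rays.
    increases = np.concatenate([point_vals - c_opt, ray_vals])
    positive = increases[increases > tol]
    gamma = positive.min() if positive.size else np.inf

    B = np.linalg.norm(points, axis=1).max()
    C0 = np.linalg.norm(c)            # dual norm of c (Euclidean norm is self-dual)
    c0 = gamma / max(2.0 * B, 1.0)
    return c0, C0


# Toy LP: minimize x1 s.t. x1 + x2 = 1, x >= 0 (extreme points (0,1), (1,0), no rays).
c0, C0 = sandwich_constants([1.0, 0.0], [[0.0, 1.0], [1.0, 0.0]], [])
print(c0, C0)
```

Enumerating extreme points is exponential in general, so this is only practical for tiny problems. It is meant to show what the constants depend on, not to be a way of computing them at scale.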

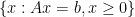

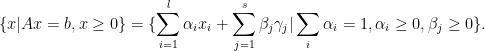

The idea of the proof mainly relies on the extreme point and extreme ray representation of the feasible region and the optimal set, i.e., $\{x \mid Ax = b, \; x \geq 0\}$ and $X^*$.

Proof: The feasible region can be written as

$$\{x \mid Ax = b, \; x \geq 0\} = \Big\{ \sum_{i=1}^{l} \alpha_i x_i + \sum_{j=1}^{s} \beta_j \gamma_j \;\Big|\; \alpha_i \geq 0, \; \sum_{i=1}^{l} \alpha_i = 1, \; \beta_j \geq 0 \Big\}.$$

Here the $x_i$s are extreme points of the feasible region and the $\gamma_j$s are extreme rays. By scaling the extreme rays, we can assume that $\|\gamma_j\| = 1$ for all $j$.

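The optimal set can also be written as

$$X^* = \Big\{ \sum_{i=1}^{l_1} \alpha_i x_i + \sum_{j=1}^{s_1} \beta_j \gamma_j \;\Big|\; \alpha_i \geq 0, \; \sum_{i=1}^{l_1} \alpha_i = 1, \; \beta_j \geq 0 \Big\}.$$

For notational simplicity, we assume here that the first $l_1$ many $x_i$s and the first $s_1$ many $\gamma_j$s are in the optimal set, and the rest of the $x_i$s and $\gamma_j$s are not.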

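We denote $B = \max_{1 \leq i \leq l} \|x_i\|$ and $\gamma = \min\{c^\top x_i - c^*, \; c^\top \gamma_j \mid l_1 < i \leq l, \; s_1 < j \leq s\}$, where $c^* = c^\top x^*$ for any $x^* \in X^*$ is the optimal value. Note $\gamma > 0$ since the $x_i$s not in the optimal set have objective value strictly larger than $c^*$, and the $\gamma_j$s not in the optimal set must have a positive inner product with $c$.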

We first prove the second inequality, i.e., $c^\top x - c^* \geq c_0 \operatorname{dist}(x, X^*)$.

Now take an arbitrary feasible $x$. It can be written as

$$x = \sum_{i=1}^{l} a_i x_i + \sum_{j=1}^{s} b_j \gamma_j$$

for some $a_i \geq 0$ with $\sum_{i=1}^{l} a_i = 1$ and $b_j \geq 0$.

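The objective value of $x$ is then

$$c^\top x = \sum_{i=1}^{l_1} a_i c^* + \sum_{i=l_1+1}^{l} a_i \, c^\top x_i + \sum_{j=s_1+1}^{s} b_j \, c^\top \gamma_j.$$

We use the fact that $c^\top x_i = c^*$ for all $i \leq l_1$ and $c^\top \gamma_j = 0$ for all $j \leq s_1$ here.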

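Subtracting $c^*$ from the above, we have

$$\begin{aligned}
c^\top x - c^* &= \Big(\sum_{i=1}^{l_1} a_i - 1\Big) c^* + \sum_{i=l_1+1}^{l} a_i \, c^\top x_i + \sum_{j=s_1+1}^{s} b_j \, c^\top \gamma_j \\
&= \sum_{i=l_1+1}^{l} a_i \, (c^\top x_i - c^*) + \sum_{j=s_1+1}^{s} b_j \, c^\top \gamma_j \\
&\geq \gamma \Big( \sum_{i=l_1+1}^{l} a_i + \sum_{j=s_1+1}^{s} b_j \Big).
\end{aligned}$$

The second equality is due to $\sum_{i=1}^{l} a_i = 1$, and the inequality follows from the definition of $\gamma$ and the fact that the $a_i$s and $b_j$s are nonnegative.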

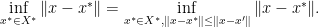

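The distance between $x$ and $X^*$ is the infimum of

$$\Big\| \sum_{i=1}^{l_1} (a_i - \alpha_i) x_i + \sum_{i=l_1+1}^{l} a_i x_i + \sum_{j=1}^{s_1} (b_j - \beta_j) \gamma_j + \sum_{j=s_1+1}^{s} b_j \gamma_j \Big\|$$

over all $\alpha_i \geq 0$ with $\sum_{i=1}^{l_1} \alpha_i = 1$ and all $\beta_j \geq 0$.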

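By taking $\alpha_i \geq a_i$ with $\sum_{i=1}^{l_1} \alpha_i = 1$ and $\beta_j = b_j$, and applying the triangle inequality to the above quantity, we have

$$\begin{aligned}
\Big\| \sum_{i=1}^{l_1} (a_i - \alpha_i) x_i + \sum_{i=l_1+1}^{l} a_i x_i + \sum_{j=s_1+1}^{s} b_j \gamma_j \Big\|
&\leq \sum_{i=1}^{l_1} (\alpha_i - a_i) \|x_i\| + \sum_{i=l_1+1}^{l} a_i \|x_i\| + \sum_{j=s_1+1}^{s} b_j \|\gamma_j\| \\
&\leq B \Big( \sum_{i=1}^{l_1} (\alpha_i - a_i) + \sum_{i=l_1+1}^{l} a_i \Big) + \sum_{j=s_1+1}^{s} b_j \\
&= B \Big( 1 - \sum_{i=1}^{l_1} a_i + \sum_{i=l_1+1}^{l} a_i \Big) + \sum_{j=s_1+1}^{s} b_j \\
&= 2B \sum_{i=l_1+1}^{l} a_i + \sum_{j=s_1+1}^{s} b_j.
\end{aligned}$$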

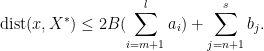


The first inequality is the triangle inequality together with $\alpha_i \geq a_i$. The second inequality applies the definition of $B$ and $\|\gamma_j\| = 1$. The first equality is due to $\sum_{i=1}^{l_1} \alpha_i = 1$ and the second equality is due to $\sum_{i=1}^{l} a_i = 1$.

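Thus the distance between $x$ and $X^*$ is bounded above by

$$\operatorname{dist}(x, X^*) \leq 2B \sum_{i=l_1+1}^{l} a_i + \sum_{j=s_1+1}^{s} b_j \leq \max\{2B, 1\} \Big( \sum_{i=l_1+1}^{l} a_i + \sum_{j=s_1+1}^{s} b_j \Big).$$

Since $c^\top x - c^* \geq \gamma \big( \sum_{i=l_1+1}^{l} a_i + \sum_{j=s_1+1}^{s} b_j \big)$ by our previous argument, we see that setting

$$c_0 = \frac{\gamma}{\max\{2B, 1\}}$$

gives us

$$c^\top x - c^* \geq c_0 \operatorname{dist}(x, X^*).$$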

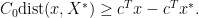

We now prove the first inequality, i.e.,

$$C_0 \operatorname{dist}(x, X^*) \geq c^\top x - c^*.$$

Note that the infimum in $\operatorname{dist}(x, X^*) = \inf_{x^* \in X^*} \|x - x^*\|$ is actually achieved by some $x^* \in X^*$. The reason is that we can first pick an $x' \in X^*$, then

$$\inf_{x^* \in X^*} \|x - x^*\| = \inf_{x^* \in X^*, \; \|x - x^*\| \leq \|x - x'\|} \|x - x^*\|.$$

But the set $\{x^* \in X^* \mid \|x - x^*\| \leq \|x - x'\|\}$ is actually bounded and closed ($X^*$ is closed as it consists of convex combinations of finitely many points plus conic combinations of finitely many extreme rays), thus the Weierstrass theorem tells us that the infimum is actually achieved by some $x^* \in X^*$.

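Now take $x^* \in X^*$ such that $\|x - x^*\| = \operatorname{dist}(x, X^*)$. We have

$$c^\top x - c^* = c^\top (x - x^*) \leq \|c\|_* \|x - x^*\| = \|c\|_* \operatorname{dist}(x, X^*),$$

where $\|\cdot\|_*$ is the dual norm of $\|\cdot\|$. Thus letting $C_0 = \|c\|_*$ finishes the proof.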
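The upper bound rests only on the dual-norm (Hölder) inequality $c^\top (x - x^*) \leq \|c\|_* \|x - x^*\|$, which holds for any pair of vectors. The sketch below checks it numerically for $p$-norms, where the dual of the $p$-norm is the $q$-norm with $1/p + 1/q = 1$. The helper name `dual_norm_bound_holds` and the restriction to $p$-norms are assumptions of this sketch; the post allows an arbitrary norm.

```python
import numpy as np


def dual_norm_bound_holds(c, x, x_star, p=1):
    """Check c^T (x - x*) <= ||c||_q * ||x - x*||_p with 1/p + 1/q = 1."""
    c, x, x_star = (np.asarray(v, dtype=float) for v in (c, x, x_star))
    # Dual exponent: p = 1 pairs with q = inf and vice versa.
    if p == 1:
        q = np.inf
    elif p == np.inf:
        q = 1
    else:
        q = p / (p - 1)
    lhs = c @ (x - x_star)
    rhs = np.linalg.norm(c, ord=q) * np.linalg.norm(x - x_star, ord=p)
    return bool(lhs <= rhs + 1e-12)   # small slack for floating-point error


# Toy LP from before: c = (1, 0), solution x* = (0, 1), feasible x = (0.3, 0.7).
print(dual_norm_bound_holds([1.0, 0.0], [0.3, 0.7], [0.0, 1.0], p=1))
print(dual_norm_bound_holds([1.0, 0.0], [0.3, 0.7], [0.0, 1.0], p=2))
```

Changing the norm changes $C_0 = \|c\|_*$ but not the argument, which is why the theorem can be stated for an arbitrary norm.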

From the proof, we see that two possible choices of $C_0$ and $c_0$ are $C_0 = \|c\|_*$ and $c_0 = \frac{\gamma}{\max\{2B, 1\}}$, where $B = \max_{1 \leq i \leq l} \|x_i\|$ and $\gamma = \min\{c^\top x_i - c^*, \; c^\top \gamma_j\}$ for $l_1 + 1 \leq i \leq l$ and $s_1 + 1 \leq j \leq s$. These are not optimal and can be sharpened. I probably will give a sharper constant in a future post.
